Introduction: Why Frameworks Matter in 2026

In 2023, building an AI agent meant wrapping GPT-4 in a while loop with tool calling. By 2026, that approach collapses in production. Real agents need state management, error recovery, human-in-the-loop workflows, multi-agent coordination, observability, and deployment infrastructure. Frameworks now handle the complexity that killed most 2023-2024 agent experiments.

The conversation has shifted from "should we use AI agents?" to "which framework survives production load?" This guide covers the frameworks that made it through the valley of death—those with active communities, production deployments, and honest limitations.

Who this guide is for:

- Developers evaluating which code-first framework to commit to for a 6-12 month project

- CTOs and engineering leaders making strategic platform decisions for their teams

- Platform teams deciding between self-hosted frameworks and managed enterprise solutions

- Anyone who has watched a demo work perfectly and then fail silently in production

Framework Categories: Understanding the Landscape

AI agent tools fall into four distinct categories. Choosing the wrong category creates more pain than choosing the wrong tool within a category.

No-Code/Low-Code Builders

Platforms like YourGPT, Voiceflow, and Botpress offer visual interfaces for designing agent workflows. You define conversation paths, connect knowledge sources, and deploy without writing code.

When to use: Standard use cases (customer support, lead qualification, FAQ automation), teams without dedicated AI engineers, rapid prototyping before committing to custom development.

Limitations: Custom logic requires workarounds or API extensions. Debugging is harder when you cannot inspect the code. Vendor lock-in is real—migrating away requires rebuilding.

Code-First Frameworks

LangGraph, CrewAI, Microsoft Agent Framework, and OpenAI Agents SDK give you code-level control. You define agents, tools, state machines, and orchestration in Python or TypeScript.

When to use: Complex workflows requiring custom logic, unique integrations not covered by no-code platforms, applications where observability and debugging are critical, teams with strong engineering capacity.

Advantages: Full control, version control, testability, portability between cloud providers, ability to debug production issues.

Enterprise Platforms

Microsoft Copilot Studio and Google Vertex AI Agent Builder offer managed infrastructure with enterprise compliance, security controls, and integration with existing enterprise systems.

When to use: Organizations with Microsoft 365 or Google Cloud commitments, regulated industries requiring compliance certifications, large-scale deployments where managed infrastructure reduces operational burden.

Specialized Agents

Coding agents (GitHub Copilot, Cursor), voice agents (dedicated voice platforms), and domain-specific agents optimized for particular use cases.

When to use: When your use case matches the specialization exactly. Generic frameworks add complexity for these narrow use cases.

Deep-Dive: Code-First Frameworks

This section covers the frameworks developers evaluate for production agent systems. Where the information is available, each assessment covers architecture, strengths, production considerations, GitHub data, real use cases, pricing, community size, and known limitations.

LangGraph: Production-Grade Stateful Agents

Architecture: LangGraph models agents as state machines built on directed graphs. Each node represents a computation step; edges define transitions based on conditions. State is explicitly defined and passed between nodes, which makes the agent's control flow explicit, traceable, and debuggable (the LLM calls inside a node remain probabilistic).
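To make the node, edge, and state vocabulary concrete, here is a minimal standard-library sketch of the idea. `TinyGraph`, its methods, and the example nodes are hypothetical names invented for illustration; this is not LangGraph's implementation or API, only the shape of the pattern.

```python
from typing import Callable, Dict, List, Tuple

State = Dict[str, object]
END = "__end__"  # sentinel used by this sketch to mark the finish


class TinyGraph:
    """Hypothetical miniature graph runner; not LangGraph's implementation."""

    def __init__(self) -> None:
        self.nodes: Dict[str, Callable[[State], State]] = {}
        self.routers: Dict[str, Callable[[State], str]] = {}

    def add_node(self, name: str, fn: Callable[[State], State]) -> None:
        self.nodes[name] = fn

    def add_edge(self, source: str, target: str) -> None:
        # An unconditional edge is a router that ignores the state.
        self.routers[source] = lambda _state: target

    def add_conditional_edge(self, source: str, router: Callable[[State], str]) -> None:
        self.routers[source] = router

    def run(self, entry: str, state: State, max_steps: int = 20) -> Tuple[State, List[str]]:
        current, trace = entry, []
        for _ in range(max_steps):
            trace.append(current)
            state = {**state, **self.nodes[current](state)}  # merge the node's update
            current = self.routers[current](state)
            if current == END:
                return state, trace
        raise RuntimeError("step limit reached; the graph may contain a cycle")


def classify(state: State) -> State:
    return {"needs_lookup": "order" in str(state["question"]).lower()}


def lookup(state: State) -> State:
    return {"context": "order status: shipped"}  # stand-in for a real tool call


def answer(state: State) -> State:
    return {"answer": f"Reply based on: {state.get('context', 'no lookup needed')}"}


graph = TinyGraph()
graph.add_node("classify", classify)
graph.add_node("lookup", lookup)
graph.add_node("answer", answer)
graph.add_conditional_edge("classify", lambda s: "lookup" if s["needs_lookup"] else "answer")
graph.add_edge("lookup", "answer")
graph.add_edge("answer", END)

final_state, trace = graph.run("classify", {"question": "Where is my order?"})
print(trace)
```

Because the trace records every node visited, the route a request took can be inspected after the fact, which is the property the architecture description is pointing at.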

The framework emerged from LangChain in 2024 as the team recognized that chains (linear compositions) could not handle production agent complexity. LangGraph 2.0, released February 2026, codifies three years of production patterns into a mature framework.

Key concepts:

- StateGraph: The core abstraction. Define state schema, add nodes (functions), add edges (transitions), compile into a runnable.

- Checkpoints: Persist state at any point. Resume execution from any checkpoint—critical for long-running agents.

- Memory: Built-in support for conversation memory, thread-level persistence, and cross-session state.

- Human-in-the-loop: Pause execution for human review, inject feedback, continue from interruption.
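The checkpoint concept can be sketched without any framework. The example below assumes a hypothetical `InMemoryCheckpointStore` and `run_with_checkpoints` helper (neither is a LangGraph name) and shows how saving state after each step lets a failed run resume without repeating completed work.

```python
import json
from typing import Callable, Dict, List, Optional

State = Dict[str, object]
Node = Callable[[State], State]


class InMemoryCheckpointStore:
    """Hypothetical store that keeps the latest snapshot per thread."""

    def __init__(self) -> None:
        self._snapshots: Dict[str, str] = {}

    def save(self, thread_id: str, next_index: int, state: State) -> None:
        # Serialize so later steps cannot mutate a saved snapshot.
        self._snapshots[thread_id] = json.dumps({"next": next_index, "state": state})

    def load(self, thread_id: str) -> Optional[dict]:
        raw = self._snapshots.get(thread_id)
        return json.loads(raw) if raw is not None else None


def run_with_checkpoints(nodes: List[Node], state: State,
                         store: InMemoryCheckpointStore, thread_id: str) -> State:
    """Run nodes in order, resuming after the last checkpointed node if one exists."""
    snapshot = store.load(thread_id)
    start = 0
    if snapshot is not None:
        start, state = snapshot["next"], snapshot["state"]
    for index in range(start, len(nodes)):
        state = {**state, **nodes[index](state)}
        store.save(thread_id, index + 1, state)
    return state


calls = {"fetch": 0, "summarize": 0}


def fetch(state: State) -> State:
    calls["fetch"] += 1
    return {"document": "raw text"}


def summarize(state: State) -> State:
    calls["summarize"] += 1
    if calls["summarize"] == 1:
        raise RuntimeError("transient failure")  # simulated outage on the first try
    return {"summary": str(state["document"]).upper()}


store = InMemoryCheckpointStore()
try:
    run_with_checkpoints([fetch, summarize], {}, store, "thread-1")
except RuntimeError:
    pass  # the first run fails after "fetch" has been checkpointed

final_state = run_with_checkpoints([fetch, summarize], {}, store, "thread-1")
print(final_state, calls)
```

The second run starts at `summarize` rather than repeating `fetch`. A production store would be a database rather than a dictionary, but the resume logic has the same shape.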

Production Strengths:

- Observability: Every state transition is traceable. LangSmith integration provides production monitoring, debugging, and evaluation.

- Testability: Graphs can be unit tested node by node. State is explicit, making assertions straightforward.

- Error recovery: Checkpointing means you never lose work. Resume from the last successful state after failures.

- Scale: Used by Uber, LinkedIn, Klarna for production workloads. 34.5 million monthly downloads suggest real production adoption.

GitHub Data (May 2026):

- Stars: ~24,800

- Monthly downloads: 34.5 million

- Open issues: 264

- Closed issues: 1,015

- Pull requests: 246 open, 3,619 merged

- Releases: 504 (latest: langgraph-cli==0.4.24)

- Contributors: 200+

Real Use Cases:

- Uber: Multi-step rider support automation with handoff to human agents

- LinkedIn: Content moderation workflows requiring complex state management

- Klarna: Financial services chatbot with compliance checkpoints

Pricing: Open source (MIT license). LangSmith (observability platform) has a free tier for development and paid tiers for production.

Known Limitations:

- Learning curve: Requires understanding graph-based state machines. Developers accustomed to linear thinking need 2-4 weeks to become productive.

- Verbosity: Simple agents require more boilerplate than CrewAI. The trade-off is explicit control.

- LangChain dependency: While LangGraph can work independently, most documentation assumes the LangChain ecosystem. Pure LangGraph requires more setup.

Best Fit: Complex multi-step workflows, applications requiring human-in-the-loop, teams prioritizing production observability, projects where agent behavior must be auditable.

    # LangGraph minimal example: a two-node stateful agent
    from typing import TypedDict

    from langgraph.graph import StateGraph, END


    class AgentState(TypedDict):
        messages: list
        context: dict
        next_action: str


    def process_query(state: AgentState) -> dict:
        # Your processing logic; return only the keys you want to update
        return {"next_action": "respond"}


    def respond(state: AgentState) -> dict:
        # Generate the response
        return {"next_action": END}


    workflow = StateGraph(AgentState)
    workflow.add_node("process", process_query)
    workflow.add_node("respond", respond)
    workflow.set_entry_point("process")
    workflow.add_edge("process", "respond")
    workflow.add_edge("respond", END)  # explicitly mark where the graph finishes

    app = workflow.compile()

AutoGen and Microsoft Agent Framework

Important: AutoGen entered maintenance mode in 2026. Microsoft recommends Microsoft Agent Framework (MAF) for new projects. This section covers both because existing AutoGen deployments still exist.

AutoGen (Legacy)

Architecture: Multi-agent conversation pattern. Agents are defined with roles (AssistantAgent, UserProxyAgent, etc.) and communicate through a conversation manager. The framework pioneered multi-agent orchestration where agents collaborate to solve tasks.

Legacy strengths:

- Multi-agent collaboration is first-class

- Rich conversation patterns (sequential, group chat, hierarchical)

- Code execution in sandboxed environments

Limitations:

- State management less explicit than LangGraph

- Fewer production case studies

- Now in maintenance mode—no new features

Microsoft Agent Framework (MAF)

Architecture: Unified framework merging AutoGen v0.4 and Semantic Kernel, released as GA in April 2026. Designed for enterprise production deployments with Azure integration.

Key features:

- Agent orchestration: Define agents, skills, and workflows

- Azure integration: Native Azure AI Services, Azure Functions, Azure Storage

- Enterprise compliance: Built-in support for enterprise security requirements

- Semantic Kernel integration: Leverage existing SK skills and plugins

Best Fit: Organizations with Microsoft 365/Azure commitments, enterprise deployments requiring compliance certifications, teams already using Semantic Kernel.

Migration note: AutoGen users should plan migration to MAF using Microsoft's migration guide. The frameworks share concepts but have different APIs.

CrewAI: Role-Based Multi-Agent Teams

Architecture: CrewAI models agents as team members with specific roles (Researcher, Writer, Analyst). Agents are organized into "crews" that collaborate on tasks. The framework emphasizes human-intuitive abstractions over low-level graph manipulation.

Built entirely from scratch (no LangChain dependency), CrewAI offers both simplicity for beginners and depth for advanced users through "Crews" and "Flows" paradigms.

Key concepts:

- Agents: Define role, goal, backstory, and tools. Each agent operates autonomously within its defined scope.

- Tasks: Specific objectives assigned to agents with expected outputs.

- Crews: Groups of agents working together. Define process (sequential, hierarchical) for task distribution.

- Flows: Event-driven orchestration for granular control in enterprise deployments.
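The sequential process can be illustrated with a few lines of plain Python. This is a sketch of the idea only: `SketchAgent`, `SketchTask`, and `run_sequential` are hypothetical names, and the lambdas stand in for LLM-backed agents. It assumes each task receives the previous task's output as context.

```python
from dataclasses import dataclass
from typing import Callable, List


@dataclass
class SketchAgent:
    role: str
    act: Callable[[str, str], str]  # (task description, context) -> output


@dataclass
class SketchTask:
    description: str
    agent: SketchAgent


def run_sequential(tasks: List[SketchTask]) -> List[str]:
    """Run tasks in order, passing each output to the next task as context."""
    outputs: List[str] = []
    context = ""
    for task in tasks:
        result = task.agent.act(task.description, context)
        outputs.append(result)
        context = result
    return outputs


researcher = SketchAgent(
    role="Research Analyst",
    act=lambda description, context: f"facts for '{description}'",
)
writer = SketchAgent(
    role="Content Writer",
    act=lambda description, context: f"article built on [{context}]",
)

outputs = run_sequential([
    SketchTask("Research the topic", researcher),
    SketchTask("Write an article", writer),
])
print(outputs[-1])
```

A hierarchical process would replace the fixed loop with a manager that decides which agent runs next; the role and task abstractions stay the same.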

Production Strengths:

- Rapid development: Working multi-agent systems in hours, not weeks

- Intuitive model: Role-based design matches how teams think about work

- CrewAI Enterprise (AMP): Triggers for Gmail, Slack, Salesforce; deployment management; RBAC

- From prototype to production in a week: Realistic timeline for experienced teams

Community Data:

- 100,000+ certified developers through learn.crewai.com

- Active community Discord and documentation

- Enterprise customers include Fortune 500 companies

Production Considerations:

- Simplicity vs. control: Easier to start than LangGraph, but complex state machines require workarounds.

- Less observability tooling: Fewer production monitoring options compared to the LangChain ecosystem.

- Rapidly evolving: New features are added frequently, so pin versions in production and test upgrades before rolling them out.

Known Limitations:

- Less explicit state: State management is more implicit than LangGraph. Harder to debug specific transitions.

- Fewer edge cases documented: Community is smaller than LangChain, so obscure issues have fewer Stack Overflow answers.

Best Fit: Teams wanting rapid prototyping, use cases where role-based agent teams match the problem, projects prioritizing developer experience over maximum control, organizations needing quick wins before committing to framework investment.

    # CrewAI minimal example: role-based team
    from crewai import Agent, Crew, Process, Task

    researcher = Agent(
        role="Research Analyst",
        goal="Find accurate information",
        backstory="Expert at finding and verifying facts",
        # tools=[search_tool],  # attach your own tool instances here
    )

    writer = Agent(
        role="Content Writer",
        goal="Create engaging content",
        backstory="Skilled at translating complex topics",
        # tools=[write_tool],  # attach your own tool instances here
    )

    research_task = Task(
        description="Research the topic",
        expected_output="A short list of verified facts",
        agent=researcher,
    )

    write_task = Task(
        description="Write an article",
        expected_output="A draft article based on the research",
        agent=writer,
    )

    crew = Crew(
        agents=[researcher, writer],
        tasks=[research_task, write_task],
        process=Process.sequential,  # run tasks in the order listed
    )

    result = crew.kickoff()

OpenAI Agents SDK (Formerly Swarm)

Architecture: OpenAI Swarm started as a lightweight educational reference implementation for multi-agent handoffs in 2024. In 2025, it evolved into the OpenAI Agents SDK—a production framework for building agents on OpenAI's platform.

Key features:

- Simplicity: Minimal abstractions, easy to understand code

- Native OpenAI integration: Optimized for GPT models

- Agent handoffs: Clean patterns for transferring between specialized agents
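The handoff pattern is easy to picture as a routing loop. The sketch below uses only the standard library; `DeskAgent`, `route`, and the keyword-based handoff rule are hypothetical simplifications and not the Agents SDK API, where the model itself decides when to hand off.

```python
from dataclasses import dataclass, field
from typing import Callable, Dict, List, Tuple


@dataclass
class DeskAgent:
    name: str
    handle: Callable[[str], str]
    # Maps a keyword in the message to the agent that should take over.
    handoffs: Dict[str, str] = field(default_factory=dict)


def route(agents: Dict[str, DeskAgent], start: str, message: str,
          max_hops: int = 5) -> Tuple[str, List[str]]:
    """Follow handoffs until an agent keeps the conversation, then let it answer."""
    current = agents[start]
    path = [current.name]
    for _ in range(max_hops):
        target = next(
            (name for keyword, name in current.handoffs.items() if keyword in message.lower()),
            None,
        )
        if target is None:
            return current.handle(message), path
        current = agents[target]
        path.append(current.name)
    raise RuntimeError("too many handoffs; check for a routing loop")


agents = {
    "triage": DeskAgent("triage", lambda m: "How can I help?",
                        {"refund": "billing", "crash": "support"}),
    "billing": DeskAgent("billing", lambda m: "Billing will process: " + m),
    "support": DeskAgent("support", lambda m: "Support is investigating: " + m),
}

reply, path = route(agents, "triage", "I want a refund")
print(path, reply)
```

The hop limit matters in practice: two agents that hand off to each other would otherwise loop forever.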

Production Considerations:

- Lightweight by design: Intentionally minimal—fewer batteries included than LangGraph or CrewAI

- OpenAI-centric: Designed around OpenAI models. Using other providers requires adaptation.

- Evolving rapidly: The transition from Swarm to Agents SDK means documentation and patterns are still stabilizing.

Best Fit: Teams committed to OpenAI's ecosystem, simple multi-agent patterns requiring quick implementation, educational purposes and prototyping, projects where minimal code is a feature.

LlamaIndex and Haystack

While primarily RAG (Retrieval-Augmented Generation) frameworks, both have evolved agent capabilities:

LlamaIndex Agents

Strength: Best-in-class data ingestion and retrieval. If your agents need sophisticated document processing, knowledge graph construction, or multi-modal data handling, LlamaIndex provides the foundation.

Agent features: Agent types include ReAct, Structured, and Conversational agents. LlamaIndex integrates with LangChain and can use LangGraph for orchestration.

Best Fit: Applications where RAG is central, teams already using LlamaIndex for document processing, projects needing sophisticated data connectors.

Haystack

Architecture: Pipeline-based framework for building AI applications. Modular architecture with components for retrieval, generation, and agent orchestration.

Strength: Flexible pipeline composition. Apache 2.0 license. Strong in production search and RAG applications.

Best Fit: Teams wanting pipeline flexibility, search-heavy applications, Python-first teams not committed to LangChain ecosystem.

Comparison Matrix: Code-First Frameworks

| Framework | Best For | Learning Curve | Production Ready | Community | Pricing |
|---|---|---|---|---|---|
| LangGraph | Complex stateful workflows, human-in-the-loop | 2-4 weeks | Excellent (Uber, LinkedIn, Klarna) | Large (34.5M downloads/mo) | MIT (Free) |
| CrewAI | Rapid prototyping, role-based teams | 1-2 weeks | Good (Enterprise AMP available) | Growing (100K+ certified) | MIT (Enterprise paid) |
| Microsoft Agent Framework | Microsoft/Azure ecosystem | 2-3 weeks | Excellent (Enterprise GA) | Large (Microsoft backing) | Free (Azure costs apply) |
| OpenAI Agents SDK | OpenAI-centric, simple patterns | 1 week | Evolving | Medium | Free (OpenAI API costs) |
| LlamaIndex Agents | RAG-heavy applications | 2-3 weeks | Good | Large | MIT (Free) |
| Haystack | Pipeline-based search/RAG | 2 weeks | Good | Medium | Apache 2.0 (Free) |

Note: "Learning curve" estimates assume familiarity with Python and LLM concepts. Your team's actual time will vary based on experience.

Enterprise Platforms: Microsoft and Google

For organizations prioritizing managed infrastructure over code-level control, enterprise platforms offer turnkey solutions with compliance and security built-in.

Microsoft Copilot Studio

Overview: Low-code/no-code platform for building agents integrated with the Microsoft 365 ecosystem. Part of the Microsoft Power Platform.

Key features:

- Visual agent designer with drag-and-drop workflows

- Native integration with Microsoft 365, Dynamics 365, Azure

- Enterprise compliance (SOC, GDPR, HIPAA)

- Copilot extensibility—enhance Microsoft 365 Copilot with custom capabilities

- Power Automate integration for workflow automation

Pricing: Consumption-based with Microsoft 365 subscriptions. Additional capacity packs available.

Best Fit: Organizations already using Microsoft 365, teams without dedicated AI engineers, regulated industries requiring compliance certifications, internal enterprise applications.

Google Vertex AI Agent Builder

Overview: Managed platform for building conversational agents on Google Cloud, formerly Dialogflow CX with enhanced AI capabilities.

Key features:

- Visual flow designer for conversation paths

- Integration with Google Cloud services (BigQuery, Cloud Storage)

- Enterprise search integration for knowledge retrieval

- Voice and text channels

- Google's Gemini models available

Pricing: Pay-per-request with Google Cloud. Dialogflow CX pricing tiers available.

Best Fit: Organizations with Google Cloud investments, contact center applications, voice-first use cases, teams leveraging BigQuery for knowledge.

Enterprise Platform Comparison

| Platform | Ecosystem | Strength | Compliance | Best For |
|---|---|---|---|---|
| Microsoft Copilot Studio | Microsoft 365, Azure | Office integration, workflow automation | SOC, GDPR, HIPAA | Internal apps, M365 shops |
| Google Vertex AI Agent Builder | Google Cloud | Contact center, voice, enterprise search | SOC, GDPR, HIPAA | Customer support, voice-first |

Decision factor: Choose based on your existing cloud commitment. Migration between enterprise platforms is costly. Neither is ideal if you need code-level control.

Decision Matrix: Choosing a Framework

Framework selection depends on five primary factors: team skills, use case complexity, scale requirements, integration needs, and budget and timeline constraints.

By Team Capability

| Team Profile | Recommended | Avoid |
|---|---|---|
| Strong Python engineers, ML experience | LangGraph | No-code platforms |
| Small team, need quick wins | CrewAI | LangGraph (overkill for simple needs) |
| Microsoft-centric org | Microsoft Agent Framework / Copilot Studio | Google ecosystem tools |
| No dedicated AI team | YourGPT, Voiceflow, Copilot Studio | Code-first frameworks |
| Research-heavy, experimentation | LangGraph + LlamaIndex | Vendor-locked platforms |

By Use Case

| Use Case | Primary Recommendation | Alternative |
|---|---|---|
| Customer support automation | YourGPT / Botpress (no-code) | CrewAI for custom logic |
| Complex multi-step workflows | LangGraph | Microsoft Agent Framework |
| Research and content generation | CrewAI | LangGraph |
| Enterprise internal tools | Copilot Studio | LangGraph + internal hosting |
| RAG-heavy applications | LlamaIndex + LangGraph | Haystack |
| Voice agents / contact centers | Google Vertex AI Agent Builder | YourGPT Voice |
| Financial services compliance | LangGraph (auditability) | Enterprise platform |

| Financial services compliance | LangGraph (auditability) | Enterprise platform |

By Scale and Budget

| Scale | Budget Priority | Recommendation |
|---|---|---|
| Startup / MVP | Minimize engineering time | CrewAI or no-code (YourGPT) |
| Growth stage | Balance control and speed | LangGraph or CrewAI |
| Enterprise | Compliance and reliability | LangGraph with observability OR enterprise platform |
| High volume production | Performance and reliability | LangGraph with checkpointing and monitoring |

Red Flags: When to Reconsider

Not every project needs an agent framework. Consider alternatives if:

- Simple FAQ chatbot: Use a basic chatbot platform. Frameworks add complexity you will not use.

- Single-step tasks: A simple function call with error handling is faster and more reliable.

- No state management needed: Stateless agents can use lighter tooling.

- Team lacks AI engineering experience: Start with no-code, migrate to code-first when complexity demands it.

- Compliance requires an audit trail: This is a reason to choose carefully rather than to skip a framework. Confirm that your framework supports logging and state persistence. LangGraph excels here.

Implementation Patterns

Production agents require patterns beyond framework basics. These patterns apply across frameworks.

Error Handling and Fallbacks

Every agent call can fail. Production systems handle failures gracefully:

- Retry with exponential backoff: LLM APIs rate limit. Retry logic prevents cascading failures.

- Fallback models: If GPT-4 is unavailable, fall back to GPT-3.5 or Claude. Define fallback chains.

- Graceful degradation: If tools fail, the agent should inform the user rather than hanging.

- Timeout handling: Every external call needs a timeout. Production systems do not wait indefinitely.

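    # LangGraph error handling pattern
    import time

    from langgraph.checkpoint.memory import MemorySaver
    from openai import RateLimitError  # assumption: swap in your provider's rate-limit exception


    # Retry with exponential backoff, then fall back to a second model.
    # `llm` and `fallback_model` are any objects exposing .invoke(prompt).
    def safe_llm_call(llm, prompt, max_retries=3, fallback_model=None):
        last_error = None
        for attempt in range(max_retries):
            try:
                return llm.invoke(prompt)
            except RateLimitError as exc:
                last_error = exc
                if attempt < max_retries - 1:
                    time.sleep(2 ** attempt)  # back off 1s, 2s, 4s, ...
            except Exception as exc:
                last_error = exc  # other errors are retried immediately
        # All retries exhausted: try the fallback model, otherwise surface the error
        if fallback_model is not None:
            return fallback_model.invoke(prompt)
        raise last_error or RuntimeError("no attempts were made")


    # Use checkpoints for state persistence (`workflow` is the StateGraph built earlier)
    checkpointer = MemorySaver()
    app = workflow.compile(checkpointer=checkpointer)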
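Timeouts and graceful degradation from the list above can be sketched with the standard library alone. `run_tool_safely` and the three example tools are hypothetical names; the sketch assumes tools are plain blocking functions and uses a worker thread to bound how long the caller waits.

```python
import time
from concurrent.futures import ThreadPoolExecutor, TimeoutError as FutureTimeout


def run_tool_safely(tool, argument, timeout_s=0.05):
    """Call a tool with a time limit and return a result the agent can relay to the user."""
    pool = ThreadPoolExecutor(max_workers=1)
    future = pool.submit(tool, argument)
    try:
        return {"ok": True, "value": future.result(timeout=timeout_s)}
    except FutureTimeout:
        return {"ok": False, "value": f"The tool did not respond within {timeout_s}s."}
    except Exception as exc:
        return {"ok": False, "value": f"The tool failed: {exc}"}
    finally:
        # Do not block on the worker; a timed-out call keeps running in the background.
        pool.shutdown(wait=False)


def fast_tool(argument):
    return f"result for {argument}"


def slow_tool(argument):
    time.sleep(0.3)  # simulates a hung dependency
    return "too late"


def broken_tool(argument):
    raise ValueError("bad input")


fast = run_tool_safely(fast_tool, "x")
slow = run_tool_safely(slow_tool, "x")
broken = run_tool_safely(broken_tool, "x")
print(fast, slow, broken)
```

One caveat with this design: the thread behind a timed-out call is not cancelled, so tools that hold scarce resources need their own internal timeouts as well.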

Human-in-the-Loop Integration

Agents that take actions (send emails, modify records, make decisions) need human approval:

- Checkpoint before action: Persist state, notify human, wait for approval.

- Review interface: Build or use a review UI. LangGraph Studio provides this.

- Timeout on approval: If the human does not respond, define the default behavior.

- Audit logging: Every approval/rejection should be logged with context.
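The approval steps above can be sketched as a small gate function. `request_approval`, the in-process `queue.Queue` standing in for a review UI, and the "reject on timeout" default are all assumptions made for illustration; a real system would persist the pending action and notify a reviewer through its own channel.

```python
import queue
from datetime import datetime, timezone

audit_log = []  # in production this would be durable storage


def request_approval(action, decisions, timeout_s=0.05, default="reject"):
    """Wait for a human decision; fall back to a default and log the outcome either way."""
    try:
        decision = decisions.get(timeout=timeout_s)
        source = "human"
    except queue.Empty:
        decision, source = default, "timeout-default"
    audit_log.append({
        "action": action,
        "decision": decision,
        "source": source,
        "at": datetime.now(timezone.utc).isoformat(),
    })
    return decision == "approve"


# A reviewer has already answered for the first action.
answered = queue.Queue()
answered.put("approve")
email_allowed = request_approval("send_email", answered)

# Nobody answers for the second action, so the default applies.
unanswered = queue.Queue()
delete_allowed = request_approval("delete_record", unanswered)

print(email_allowed, delete_allowed, audit_log)
```

Defaulting to rejection is the conservative choice for destructive actions; for low-risk actions a team might reasonably choose the opposite default.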

Monitoring and Observability

Production agents are distributed systems. Observability is not optional:

- Trace every step: Know which node produced which output. LangSmith, LangFuse, or custom logging.

- Track token usage: LLM costs scale with usage. Monitor per-agent, per-conversation costs.

- Measure latency: Each node adds latency. Track p50, p95, p99 response times.

- Alert on anomalies: Sudden cost spikes, latency increases, or error rates require investigation.

- Evaluation in production: Periodically sample agent outputs for quality assessment.
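As a rough illustration of step tracing, token tracking, and latency percentiles, the sketch below uses a decorator and in-memory counters. `traced`, `record_tokens`, and `percentile` are hypothetical helpers, and counting words is only a stand-in for the token counts a real LLM response would report.

```python
import math
import time
from collections import defaultdict
from functools import wraps

latencies_ms = defaultdict(list)  # node name -> list of durations
token_usage = defaultdict(int)    # node name -> total tokens


def traced(node_name):
    """Record how long each call to the wrapped node takes, even if it raises."""
    def decorator(fn):
        @wraps(fn)
        def wrapper(*args, **kwargs):
            started = time.perf_counter()
            try:
                return fn(*args, **kwargs)
            finally:
                latencies_ms[node_name].append((time.perf_counter() - started) * 1000)
        return wrapper
    return decorator


def record_tokens(node_name, prompt_tokens, completion_tokens):
    token_usage[node_name] += prompt_tokens + completion_tokens


def percentile(values, pct):
    """Nearest-rank percentile: the smallest value with at least pct% of data at or below it."""
    ordered = sorted(values)
    rank = max(1, math.ceil(pct * len(ordered) / 100))
    return ordered[rank - 1]


@traced("summarize")
def summarize(text):
    # Word count stands in for real token counts in this sketch.
    record_tokens("summarize", prompt_tokens=len(text.split()), completion_tokens=5)
    return text[:20]


for sample in ["one two three", "four five", "six"]:
    summarize(sample)

print(token_usage["summarize"], len(latencies_ms["summarize"]))
print(percentile([10, 20, 30, 40, 50, 60, 70, 80, 90, 100], 95))
```

In practice these numbers would be exported to a tracing backend rather than held in module-level dictionaries, but the per-node granularity is the part worth keeping.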

Testing and Evaluation

Agent behavior is probabilistic. Testing requires different approaches:

- Unit test nodes: Test individual functions with known inputs and expected outputs.

- Integration test flows: Run end-to-end scenarios with expected outcomes.

- Evaluation datasets: Create representative inputs with expected outputs. Measure accuracy.

- A/B testing: Deploy new agent versions to subsets of traffic. Compare outcomes.

- Regression testing: When modifying prompts, run the evaluation suite to catch quality regressions.
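A minimal sketch of the first three testing ideas, assuming a deterministic routing node that can be checked without calling an LLM. `route_intent`, `EVAL_SET`, and `BASELINE_ACCURACY` are illustrative names and values, not part of any framework.

```python
def route_intent(state):
    """Example node: a pure function of state, so it can be tested in isolation."""
    text = state["message"].lower()
    if "refund" in text:
        return {"intent": "billing"}
    if "password" in text:
        return {"intent": "account"}
    return {"intent": "general"}


# Unit test: known input, expected output.
assert route_intent({"message": "Please REFUND my order"}) == {"intent": "billing"}

# Evaluation dataset: representative inputs with expected labels.
EVAL_SET = [
    {"message": "I need a refund", "expected": "billing"},
    {"message": "Reset my password please", "expected": "account"},
    {"message": "What are your opening hours?", "expected": "general"},
    {"message": "Why was I charged twice?", "expected": "billing"},  # known hard case
]


def evaluate(node, dataset):
    """Return the fraction of cases where the node's intent matches the expected label."""
    correct = sum(
        node({"message": case["message"]})["intent"] == case["expected"]
        for case in dataset
    )
    return correct / len(dataset)


BASELINE_ACCURACY = 0.75  # hypothetical score of the currently deployed version

accuracy = evaluate(route_intent, EVAL_SET)
# Regression gate: a change that scores below the baseline should not ship.
assert accuracy >= BASELINE_ACCURACY, f"quality regression: {accuracy:.2f}"
print(f"accuracy = {accuracy:.2f}")
```

For nodes that do call an LLM, the same harness applies, but exact-match scoring usually gives way to rubric-based or model-graded scoring, and the threshold needs some tolerance for run-to-run variation.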

Trends for 2026

The agent framework landscape is converging. Understanding trends helps with strategic decisions:

Multi-Agent Systems Become Standard

Single-agent architectures handle limited complexity. Multi-agent coordination (supervisor pattern, peer-to-peer, hierarchical) is now standard for production workloads. Both LangGraph and CrewAI provide first-class multi-agent support. Expect frameworks to converge on common patterns.
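The supervisor pattern mentioned above can be sketched as a loop in which one function picks the next worker based on shared state. All names here (`supervisor`, `run_team`, the three workers) are hypothetical, and the rule-based supervisor stands in for what would normally be an LLM decision.

```python
def researcher(state):
    return {"notes": f"facts about {state['topic']}"}


def writer(state):
    return {"draft": f"Article using {state['notes']}"}


def reviewer(state):
    return {"approved": len(state["draft"]) > 0}


WORKERS = {"researcher": researcher, "writer": writer, "reviewer": reviewer}


def supervisor(state):
    """Decide which worker should act next, or finish when nothing is missing."""
    if "notes" not in state:
        return "researcher"
    if "draft" not in state:
        return "writer"
    if not state.get("approved"):
        return "reviewer"
    return "FINISH"


def run_team(state, max_turns=10):
    history = []
    for _ in range(max_turns):
        choice = supervisor(state)
        if choice == "FINISH":
            return state, history
        history.append(choice)
        state = {**state, **WORKERS[choice](state)}
    raise RuntimeError("supervisor did not finish within the turn limit")


final_state, history = run_team({"topic": "agent frameworks"})
print(history)
```

The turn limit is the important safeguard: a supervisor that never reaches its finish condition would otherwise consume tokens indefinitely.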

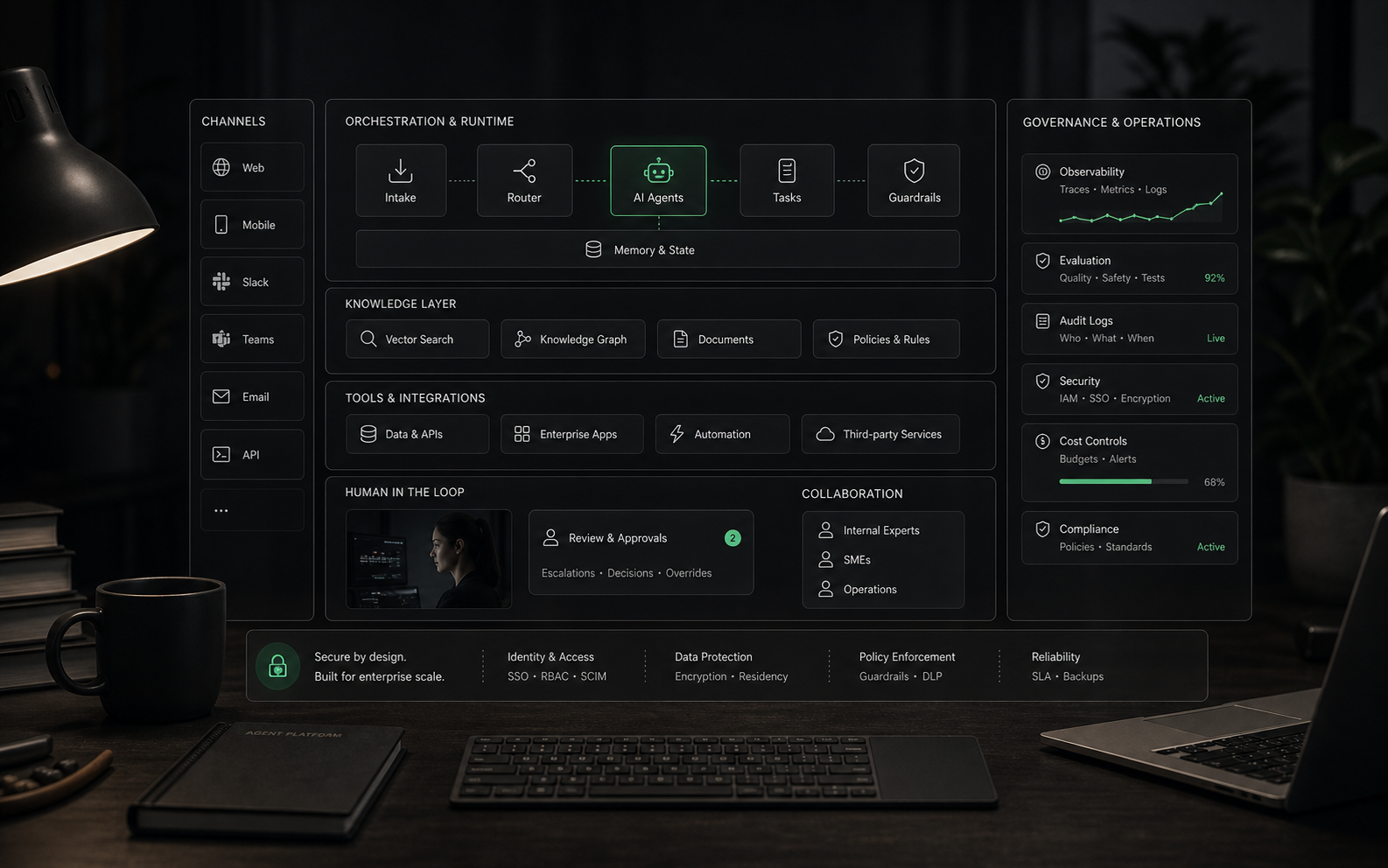

Agent Orchestration Platforms

As agents proliferate, organizations need orchestration layers that manage agent lifecycles, routing, and monitoring. Expect growth in "agent platform" offerings that sit above individual frameworks. Microsoft Agent Framework and enterprise platforms point toward this future.

Framework Convergence

LangGraph, CrewAI, and other frameworks are implementing similar patterns (checkpointing, memory, human-in-the-loop). Core capabilities are becoming table stakes. Differentiation shifts to developer experience, ecosystem, and production tooling.

Model-Agnostic Becomes Default

Frameworks that lock into single model providers lose. LangGraph works with any LLM. CrewAI supports multiple providers. Expect this flexibility to remain essential as models evolve rapidly.
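One way to keep application code model-agnostic is to depend on a small interface rather than a vendor client. The sketch below is an assumption-laden illustration: `ChatModel`, the two stub providers, and `complete_with_fallback` are invented names, and real adapters would wrap each vendor's SDK behind the same method.

```python
from typing import List, Protocol


class ChatModel(Protocol):
    """The only surface the agent code depends on."""

    def complete(self, prompt: str) -> str: ...


class PrimaryStub:
    """Stand-in for a provider that is currently unavailable."""

    def complete(self, prompt: str) -> str:
        raise ConnectionError("provider unavailable")


class SecondaryStub:
    """Stand-in for a second provider with the same interface."""

    def complete(self, prompt: str) -> str:
        return f"[secondary] {prompt}"


def complete_with_fallback(models: List[ChatModel], prompt: str) -> str:
    """Try each provider in order and return the first successful completion."""
    errors = []
    for model in models:
        try:
            return model.complete(prompt)
        except Exception as exc:
            errors.append(f"{type(model).__name__}: {exc}")
    raise RuntimeError("all providers failed: " + "; ".join(errors))


answer = complete_with_fallback([PrimaryStub(), SecondaryStub()], "Summarize the ticket")
print(answer)
```

Swapping providers then means writing one new adapter class rather than touching agent logic, which is the practical payoff of the model-agnostic approach.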

What to Watch

- LangGraph 2.0 ecosystem: LangChain is investing heavily. Expect continued innovation.

- CrewAI Enterprise adoption: If enterprise AMP gains traction, CrewAI becomes viable for large-scale deployments.

- Model providers building frameworks: OpenAI's Agents SDK, Anthropic's framework efforts. Vendor frameworks may lock you in.

- Standardization efforts: As patterns mature, expect standardization of agent interfaces and orchestration.

FAQ

Common questions

What is the difference between LangGraph and CrewAI?

LangGraph uses a graph-based architecture with explicit state management, ideal for complex workflows requiring fine-grained control. CrewAI uses a role-based agent model where agents are assigned specific roles and collaborate as a crew, making it simpler to start with but less flexible for unconventional workflows.

Is AutoGen still maintained in 2026?

AutoGen entered maintenance mode in 2026. Microsoft now recommends Microsoft Agent Framework (MAF) for new projects, which merged AutoGen v0.4 with Semantic Kernel. Existing AutoGen users should plan migration to MAF.

Which framework is best for production AI agents?

LangGraph and CrewAI are the most production-ready code-first frameworks in 2026. LangGraph excels for complex stateful workflows (used by Uber, LinkedIn, Klarna). CrewAI is better for rapid prototyping and when role-based agent teams match your use case. Enterprise teams should also evaluate Microsoft Agent Framework, along with managed platforms such as Microsoft Copilot Studio and Google Vertex AI Agent Builder.

Should I use a code-first or no-code AI agent platform?

Code-first frameworks (LangGraph, CrewAI) offer maximum control and are essential when you need custom logic, unique integrations, or deep observability. No-code platforms (YourGPT, Voiceflow) are faster to deploy for standard use cases like customer support, but trade flexibility for speed. Many teams start with no-code and migrate to code-first when complexity grows.

What is the learning curve for AI agent frameworks?

CrewAI has the shallowest learning curve: developers can build working multi-agent systems in hours. LangGraph requires understanding graph-based state machines but rewards that effort with production-grade control. Microsoft Agent Framework has moderate complexity with excellent documentation. Plan 2-4 weeks for LangGraph proficiency, 1-2 weeks for CrewAI.
